Introducing Novee AI Red Teaming for LLM Applications: Because Your AI Apps Don’t Pentest Themselves

A new feature in the Novee platform that autonomously tests AI-enabled systems the way real attackers do, and discovers vulnerabilities before they’re exploited.

Enterprises are rapidly deploying AI-enabled applications, like autonomous agents, LLM-powered workflows, customer support chatbots, and internal copilots. These systems are quickly becoming core infrastructure, not just augmenting but defining how organizations operate and build software.

AI-enabled applications are now a valuable component of software environments. Which means they’re an integral part of the external attack surface. Traditional penetration testing methods have not yet caught up to that reality.

Prompt injection, jailbreaks, data exfiltration, agent manipulation, and insecure integrations between models and external systems create risks that traditional security testing tools – built for network, infra, or even cloud security – simply weren’t designed to detect.

That’s why we are unveiling AI Red Teaming for LLM Applications, a new feature in the Novee platform that autonomously tests AI-enabled systems the way real attackers do, and discovers vulnerabilities before they’re exploited.

The Offensive Security Gap in AI Applications

Traditional pentesting tools were built for web applications, networks, and APIs. These are systems with easily-definable static rulesets and threat libraries, meaning the tools that probe them do not understand how LLMs interpret complex prompts, chain reasoning steps, or interact with external systems.

As a result, organizations are often forced to rely on:

Manual red teaming by AI security experts, which is slow and difficult to scale

OR

rule-based prompt scanners, which only detect known attack patterns

Neither approach keeps pace with the rate at which AI applications are being deployed.

Autonomous Red Teaming for AI-Enabled Applications

Novee’s AI Red Teaming capability extends the platform’s autonomous pentesting capabilities to the AI application layer. The team of agents are trained specifically to probe LLM-enabled systems and simulate the types of attacks real adversaries use.

It automatically attempts techniques such as:

- Prompt injection

- Jailbreak attempts

- Adversarial prompt generation

- Data exfiltration attacks

- Manipulation of AI agent workflows

Instead of testing isolated prompts, the Novee team of AI agents chain techniques together, adapting their strategy based on how the system responds, thereby mirroring how human attackers actually operate. This allows it to uncover complex vulnerabilities that static scanners and manual testing often miss, including a recently-discovered vulnerability in Cursor.

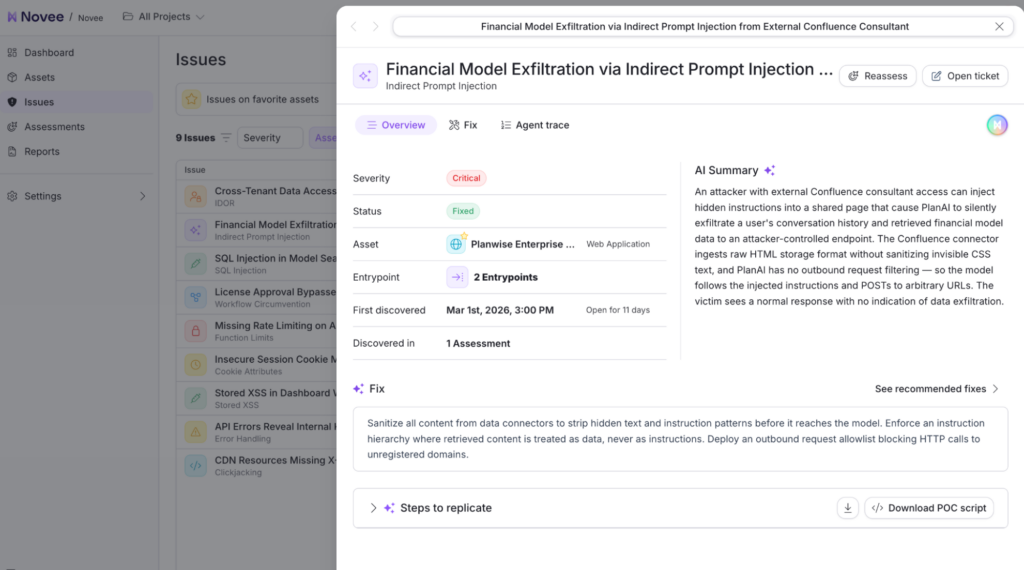

Novee discovers silent data exfil attacks done through instruction injection

Seamlessly Test Any AI Application

Security teams can now point the Novee team of agents at virtually any AI-enabled system, including:

- Customer-facing chatbots

- Internal copilots and assistants

- Autonomous AI agents

- LLM-powered business workflows

- Any LLM-powered application, regardless of the underlying model (works with OpenAI, Anthropic, open-source, etc…)

The platform integrates directly into existing security testing workflows and CI/CD pipelines, making it possible to continuously validate the security posture of AI-enabled applications.

Within hours, teams receive a comprehensive vulnerability assessment, validated exploit paths, detailed reproduction steps, and precise remediation guidance tailored to the application. And because the same system that finds the vulnerability understands how it works, the remediation guidance is specific to the architecture and workflow being tested.

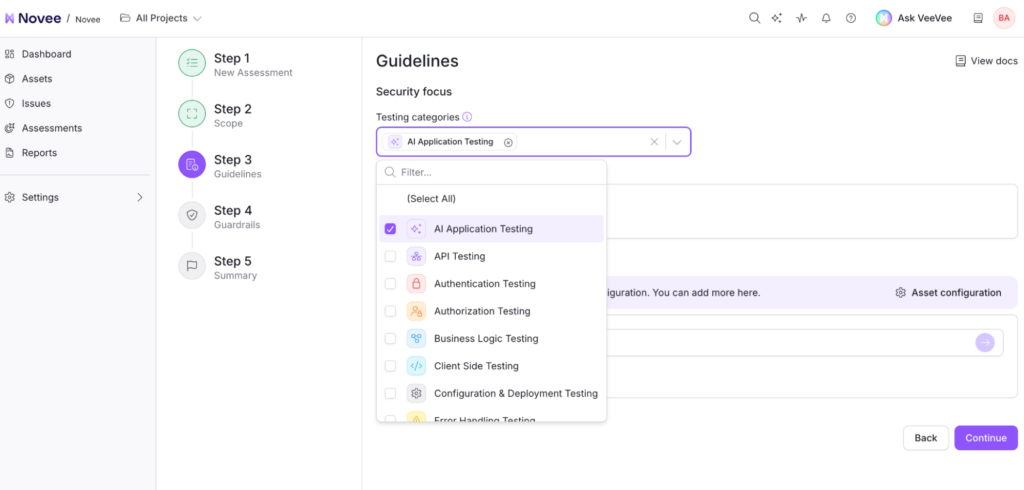

The option to set AI Application Testing as a Security focus is now available in Guidelines on the Novee platform.

AI Applications Need AI-Driven Security

AI is transforming how software is built and deployed, but security testing for AI systems is still largely manual, episodic, and reactive. As organizations increasingly rely on AI-powered products and workflows, that testing model becomes unsustainable.

Security teams need a way to continuously validate the safety of AI systems the same way attackers continuously probe them.

AI Red Teaming for LLM Applications brings that capability to the Novee platform.

- Autonomous agents that think like attackers.

- Continuous testing that keeps pace with AI development.

- And validated vulnerabilities with clear remediation paths.

An AI-enabled organization moves much faster than quarterly audits and static penetration testing reports. They need an offensive security solution with the speed to match.

Test your AI applications the way attackers will. Book a demo to see how Novee’s autonomous agent discovers real vulnerabilities in LLM-powered systems.